I like experimenting with new builds pretty frequently in my work. I find that these projects generally take one of two shapes:

Concepts of a Plan

These tend to be spur-of-the-moment ideas where I want to experiment with an atomic unit of software.

Fully Formed Idea

The hallmark is a well-defined problem statement, usually something I’ve observed or been asked about multiple times over a few weeks.

Concepts

Often these experiments occur inside pre-existing projects, but I do build them standalone from time to time. I tend to optimize these projects for speed, focusing more on idea validation – a rough draft.

Example

Take our website style guide and build out an interactive landing page where prospects can adjust a usage slider to see the pricing impact of our tool vs a competitor.

Feeding the above prompt to a coding agent will typically elicit some Q&A (e.g., via Claude’s AskUserQuestion tool) before it gets to building. I gave Claude a similar prompt at our SKO last year, during a session on pricing. Within a few minutes, I was able to share the HTML file and receive feedback from my team.

We didn't use the output file, nor did we use the concept at all. Rather than debating the merits of the concept and whether a visual was even needed, my colleagues and I quickly determined that an interactive price visualizer didn’t make sense for the message we wanted to convey.

To us, this was a valuable experience and a better use of time than a meeting or endless Slack threads.

Fully Formed

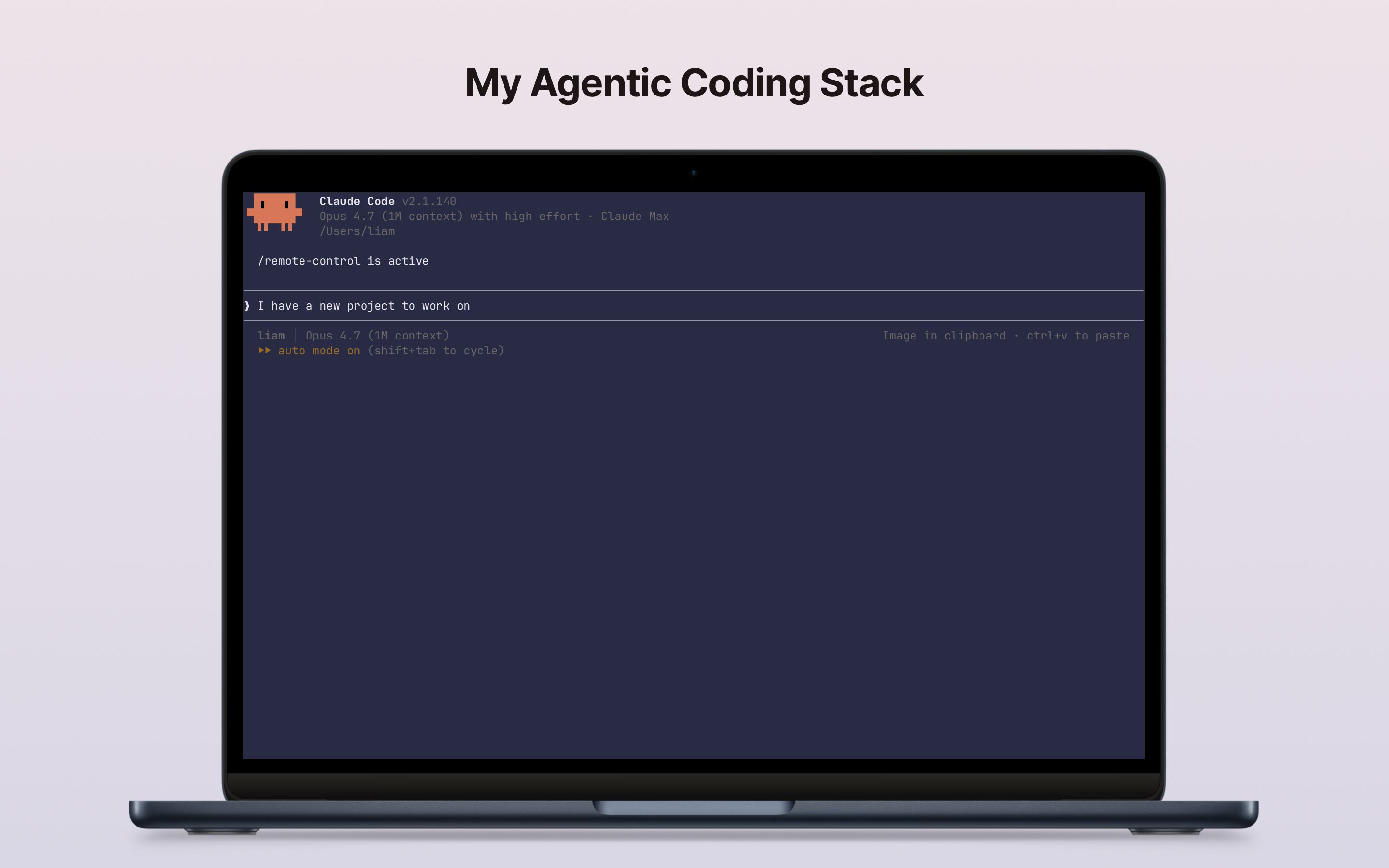

When starting a greenfield project, I have a particular stack that I lean towards. The LLMs are very comfortable working with some of these tools, while others require more steering (usually via skills and explicit prompting). Through lots of experimentation and personal side projects, I've found that the trade-off is worth it. By avoiding shortcuts, I'm able to create systems that are easier to maintain long term.

On skills:

As a general rule, I try to minimize the number of skills I give my agents. When I do need skills, I find that Vercel’s excellent skills.sh is the most convenient aggregator for me. I’m quickly able to discover and install skills. It’s a zero-effort addition to my ever-evolving process. If you’re not using it, I recommend you check it out.

The Basics

Ninety-nine percent of my projects use:

Language: TypeScript

Framework: Next.js (full-stack, runs on Node.js)

UI: React (what Next.js is built on)

Components: shadcn/ui

Styling: Tailwind CSS (what shadcn is built on)

I generally chuck these in a list and tell the agent to use the latest publicly available version of each. For shadcn, I vary between Radix and Base UI. I don’t think it has a material impact when you’re first starting out; just pick one or let the LLM pick.

Skills

There are two skills that I've started using in the past month which I find immensely helpful: grill-me and to-prd.

Both are created by Matt Pocock; I’d recommend checking out his YouTube channel for excellent agentic coding frameworks and tips. In short, grill-me initiates an interview session where the LLM asks you a ton of questions on the project or feature you're looking to implement. Then, you invoke to-prd, which takes the context you created in the chat and writes a product requirements doc that another coding agent can execute on.

This simple one-two approach helps you uncover gaps in your logic and documents everything in a way that the coding agents can understand and build upon.

The rest

IDE: Zed

Auth: Better Auth¹ with Google OAuth

Tasks/Agents: Trigger.dev¹

LLM Observability: Galileo

Most of these are pretty self-explanatory, but there are some deliberate trade-offs I've made here.

On the hosting front, I find Vercel and Railway equally easy to use. The trade-off comes down to cost and persistence. Vercel is a serverless architecture, so instances spin up and spin down. If you need a service that is persistently running, Railway is a great option. Otherwise I think Vercel is simpler to use, especially for those of us coming into the agentic development world without engineering backgrounds. The LLMs know both platforms well, and both are easy to work with without any skills.

With hosting sorted, authentication is the next most important item to address. I've tried various auth libraries. While they all work, I've found that Better Auth is the simplest for me to get started with, and it works especially well if I'm creating an internal business app. I will almost always opt for the following Better Auth setup: an Internal Google OAuth application with sign in with Google as the only option. This keeps the apps secure and makes it much harder to accidentally or deliberately exfiltrate data outside of the organization. If I were to build something that required billing or multi-tenancy, I would look at Clerk or WorkOS.
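As a complement to the Internal Google OAuth setup described above, a sign-in hook can also check the email domain before a session is created. The sketch below is a plain TypeScript helper, not part of Better Auth's API; `isOrgEmail` and the `example.com` domain are hypothetical and only there for illustration.

```typescript
// Hypothetical helper: true only for addresses in the organization's domain.
// Not a Better Auth API; call it from whatever sign-in hook your auth library exposes.
function isOrgEmail(email: string, orgDomain: string): boolean {
  const parts = email.trim().toLowerCase().split("@");
  // Reject anything that is not exactly "local@domain".
  if (parts.length !== 2) return false;
  const [local, domain] = parts;
  if (!local || !domain) return false;
  return domain === orgDomain.toLowerCase();
}
```

Matching the full domain, rather than checking whether the address merely ends with it, avoids accepting look-alikes such as `sam@example.com.evil.com`.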

Rather than using the OpenAI or Anthropic SDKs, I like to use Vercel AI Gateway because it gives me the most flexibility (switching LLMs is a breeze) and centralizes billing with my hosting (if I’m hosting the project on Vercel).
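A minimal sketch of why switching stays cheap: if the model is just a string read from configuration, changing LLMs is a config change rather than an SDK swap. The `AI_MODEL` variable name, the `provider/model` ID format, the placeholder IDs, and the `resolveModelId` helper are all assumptions for illustration; the actual call into the gateway is left out.

```typescript
// Placeholder default; real model IDs depend on what your gateway exposes.
const DEFAULT_MODEL_ID = "provider-a/model-x";

// Hypothetical helper: pick the model ID from config, falling back to the default.
function resolveModelId(env: Record<string, string | undefined>): string {
  const candidate = env["AI_MODEL"]?.trim();
  // Accept only "provider/model" shaped strings; anything else uses the default.
  if (candidate && /^[a-z0-9-]+\/[a-z0-9.:-]+$/i.test(candidate)) {
    return candidate;
  }
  return DEFAULT_MODEL_ID;
}
```

The resolved string would then be handed to whatever client talks to the gateway, so the rest of the codebase never names a specific provider.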

Vercel recently announced a Workflow feature for running those AI functions. It competes with my preferred tool, Trigger.dev. I'm still wrapping my head around the best ways to use Trigger, so I don't want to jump ship at this time. The two main advantages I see in Trigger:

Long-running agents executing my TypeScript code on Trigger's infrastructure

Robust logging so I can easily debug when things go awry

Speaking of logging, I think even simple personal projects or basic internal apps would benefit greatly from LLM observability. I'm biased because I work at Galileo, but I genuinely think the platform is easy to use and really helpful when setting up your project. Think of it like this: your project is up and running and you're getting output from your LLM steps or your agents. How do you know the right tool calls are happening, that your agent is adhering to the prompt instructions, or whether the output is relevant to the context it received? You could be flying blind. With a few simple tweaks powered by an observability tool, you can drastically improve your app's performance.
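To make those questions concrete, here is a minimal sketch of the kind of check an observability tool automates: record each agent step, then compare the tool calls that actually happened against the ones you expected. This is plain TypeScript, not Galileo's SDK; `TraceStep`, `missingToolCalls`, and the tool names are hypothetical.

```typescript
// One recorded step in an agent run (hypothetical shape for illustration).
interface TraceStep {
  kind: "llm" | "tool";
  label: string; // model or tool name
  output: string;
}

// Returns the expected tools that never appear as tool steps in the trace.
function missingToolCalls(trace: TraceStep[], expectedTools: string[]): string[] {
  return expectedTools.filter(
    (tool) => !trace.some((step) => step.kind === "tool" && step.label === tool),
  );
}

const exampleTrace: TraceStep[] = [
  { kind: "llm", label: "planner", output: "Look up the docs promised on the call." },
  { kind: "tool", label: "search_docs", output: "3 results" },
];

// send_email was expected but never called, so it is reported as missing.
const missingTools = missingToolCalls(exampleTrace, ["search_docs", "send_email"]);
```

A real platform does far more than this (prompt adherence, context relevance), but even a check this small would catch an agent that silently skips a step.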

Finally, the big one, your database. This is where I think LLMs are setting a lot of people up for failure.

Through luck or ingenious strategy, Supabase has become the default option that LLMs recommend. I've used Supabase for countless projects and as an introduction to how backend databases work, it was great. But it gets pricey really fast. I also tend to think that the static nature of a SQL database isn't the right approach for most modern AI-powered apps where data structures are fluid.

For example, you may be building a follow-up tool that helps SEs find, prepare, and send documentation that they promised on a demo call. Your data might have a specific shape when you initially create the app, but you'll likely be iterating rapidly with your field team on how the app works and what functions are important. Software development from a GTM Engineering standpoint is less bound by traditional Agile practices. This might mean you're getting input from lots of places all at once and potentially building, testing, and shipping multiple times a day or week. If you're running a traditional database like Supabase, you have to run a database migration for every change to the data shape.

Data shape: Literally the fields in your data. You might set the following as fields from a call or meeting object:

id

participants

transcript
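In TypeScript terms, that data shape could be written as an interface. Only the field names come from the list above; the types and the sample values are assumptions.

```typescript
// Sketch of the meeting object; field types are assumed for illustration.
interface Meeting {
  id: string;
  participants: string[];
  transcript: string;
}

// A sample record with made-up values.
const sampleMeeting: Meeting = {
  id: "mtg_001",
  participants: ["se@example.com", "prospect@example.org"],
  transcript: "Thanks for joining the demo...",
};
```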

Traditional SQL databases have been around for a while and are well represented in the LLM training data. This means working with them is trivial at first and easy as the project grows. But we have other options.

Inspired by Theo (yes I know he's controversial and can be quite annoying at times), I've started experimenting with Convex. To keep a long story short, Convex lets you skip the data migration and allows you to define the shape of your data in your codebase. This means when you realize that your SE documentation app needs account_id as well as phase_of_the_moon for each meeting object, you simply update your code and the database updates live.
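A rough sketch of what "the shape lives in your codebase" can look like, written as plain TypeScript rather than Convex's actual schema API. The new fields are marked optional so records created before the change still fit; the field types and the `describeMeeting` helper are assumptions for illustration.

```typescript
// The meeting shape after adding the two new fields.
// Optional fields let older records (without them) remain valid.
interface MeetingRecord {
  id: string;
  participants: string[];
  transcript: string;
  account_id?: string;
  phase_of_the_moon?: string;
}

// Hypothetical helper that works for both old and new records.
function describeMeeting(meeting: MeetingRecord): string {
  const account = meeting.account_id ?? "unassigned";
  const moon = meeting.phase_of_the_moon ?? "unknown";
  return `${meeting.id}: account=${account}, moon=${moon}`;
}

const oldRecord: MeetingRecord = { id: "mtg_001", participants: [], transcript: "" };
const newRecord: MeetingRecord = {
  ...oldRecord,
  id: "mtg_002",
  account_id: "acct_42",
  phase_of_the_moon: "waxing gibbous",
};
```

Because the change is an edit to a type in the repo, it goes through the same review and deploy path as the rest of the code.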

There are lots of other advantages, but I would recommend watching this video if you're interested in learning more. The downside of using Convex is the LLMs get a little lost, especially during the implementation. I found that once you get everything set up, they work a lot better, and the official skills from Convex help make this work a lot faster.

Bottom line, Supabase is overpriced. If you're going to build something quick and dirty or simply prefer SQL databases, I recommend you use Neon. It's what I use whenever I don't need the full power of Convex. If you're curious about how Convex could help, watch the video I linked, check out their website, read some documentation, and experiment on a new project. It's going to take a little bit more time but I think the effort is worth it.

Folder Structure

Lastly, I like to create a docs folder where I store research and plans.

<project-name>/
└── docs/
    ├── research/
    ├── plans/
    └── completed-plans/

If I'm using an LLM's plan mode or the /to-prd skill, I always save the markdown of the plan in this folder, then start the chat with a new context. This is also helpful if I want to create multiple plans but not execute them all at once. Once I complete a plan, I'll generally move it to the completed-plans folder, although there are valid arguments for throwing the plan away or using a project management tool like Linear to keep track of everything. Linear's MCP server is very good and I've used it in the past, so I may move back to it in the future.
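The plan lifecycle above can be sketched as two small path helpers. The date-plus-slug file naming is an assumption rather than something the folder structure requires, and `planPath` and `completedPath` are hypothetical names.

```typescript
// Build a path like docs/plans/2025-01-15-pricing-visualizer.md.
// The naming scheme is an assumption for illustration.
function planPath(title: string, isoDate: string): string {
  const slug = title
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse non-alphanumeric runs into one hyphen
    .replace(/^-+|-+$/g, ""); // trim leading and trailing hyphens
  return `docs/plans/${isoDate}-${slug}.md`;
}

// Map a plan's path to where it lives once the work is done.
function completedPath(planFile: string): string {
  return planFile.replace(/^docs\/plans\//, "docs/completed-plans/");
}
```

Keeping both helpers as pure string functions means the agent, a script, or a human can apply the same convention without touching the file system.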

Conclusion

I've spent a lot of time researching and thinking about my agentic coding setup. Coming from the world of sales and marketing, this isn't something we are taught to do for making project plans or creating PowerPoints. But it's something our colleagues on product and engineering teams think about deeply and constantly.

As agentic coding tools get democratized across organizations and functions, there are two ways you can up-level your skills (if you don't have an engineering background):

Practice: Repetition is going to teach you what works and what doesn't, and will set you apart from the competition.

Opinion: Think about and have a point of view on the architecture you like to use and why you want to build with it.

If you let the agents make all the decisions for you, you're going to get lost pretty quickly, stranded with an app that is hard to maintain and feels like a black box rather than your own creation.

¹ I use a setup/config skill provided by the tool creator to help guide the coding agent.